For years, interacting with AI meant typing into a box… and waiting.

You type. You edit. You rephrase. You hit enter… Then you wait again.

It works – but it never felt natural.

Humans don’t communicate like that. We speak. We interrupt. We change thoughts mid-sentence. We react in real time.

Now, AI is finally catching up.

Voice, video, and real-time multimodal intelligence are replacing text-based interaction, and this shift is bigger than most people realize. It’s not just a new feature. It’s a complete change in how humans and machines work together.

And it’s quietly challenging the dominance of assistants like Siri and Alexa.

The Friction of Text and the Fall of Legacy Assistants

Typing creates friction in text-based AI interactions. The average person speaks around 150 words per minute but types only 30–40 words per minute, which means we slow down our thinking just to communicate with AI. As a result, we shorten sentences, remove context, simplify ideas, and lose emotion and urgency. By the time the AI responds, the moment has often passed. Now imagine a different experience: you speak naturally, and the AI understands your tone, sees your screen, remembers context, and responds instantly. At that point, it’s no longer just a chatbot. It becomes an intelligent assistant. And this is exactly where the next AI battleground begins.
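To make the speaking-versus-typing gap concrete, here is a small worked calculation using the rates quoted above. The 300-word message length is an assumption chosen purely for illustration.

```python
# Illustrative arithmetic only. The rates come from the article;
# the 300-word message length is a hypothetical example.
SPEAKING_WPM = 150
TYPING_WPM_RANGE = (30, 40)


def minutes_to_convey(words: int, words_per_minute: float) -> float:
    """Time in minutes needed to produce `words` at the given rate."""
    return words / words_per_minute


message_words = 300  # hypothetical message length
spoken = minutes_to_convey(message_words, SPEAKING_WPM)
typed_slow = minutes_to_convey(message_words, TYPING_WPM_RANGE[0])
typed_fast = minutes_to_convey(message_words, TYPING_WPM_RANGE[1])

print(f"Spoken: {spoken:.1f} min")
print(f"Typed:  {typed_fast:.1f} to {typed_slow:.1f} min")
```

At these rates, the same message takes roughly four to five times longer to type than to say, which is the friction that pushes people to trim context.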

Why Siri and Alexa Are No Longer Enough

Voice assistants like Siri and Alexa were revolutionary when they launched. They introduced hands-free computing. But they were built for commands, not conversations.

You can say:

- “Set alarm”

- “Play music”

- “What’s the weather”

But try something complex:

- “Summarize this meeting while translating to Spanish”

- “Join this call and take notes”

- “Analyze this document and suggest next steps”

They struggle.

Why?

Because legacy assistants rely on scripted intent recognition, not real reasoning. They don’t understand the deeper context. They don’t remember conversations. They don’t operate across enterprise workflows.

And businesses today need far more than simple commands.

They need AI that can listen, understand, decide, and act, all in real time.

The Shift: From Smart Speakers to Intelligent Voice Agents

The transition from “Smart Speakers” to Intelligent Multimodal Agents is built on three foundational advancements:

1. Large Multimodal Models (LMMs)

Modern models have evolved beyond basic text. They can now process parallel streams of information, analyzing speech patterns, semantic content, and emotional undertones simultaneously. This allows for “barge-in” capabilities, where the user can interrupt the AI mid-response and it still maintains the thread of conversation, much like a human colleague.
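The barge-in behavior described above can be sketched with ordinary async code. This is a minimal simulation under stated assumptions, not any vendor's implementation: `speak`, `respond_with_barge_in`, and the timings are hypothetical, and `asyncio.sleep` stands in for real audio playback and voice-activity detection.

```python
import asyncio

REPLY = ["Your", "meeting", "is", "at", "three", "tomorrow"]  # hypothetical reply


async def speak(chunks, spoken):
    """Simulated playback: emit one chunk, then wait as if audio were playing."""
    for chunk in chunks:
        spoken.append(chunk)
        await asyncio.sleep(0.05)  # stand-in for playback time


async def respond_with_barge_in(chunks, user_started_talking):
    """Play a reply, but stop as soon as the user starts talking.

    Returns what was actually spoken, so the dialogue state can record
    where the reply was cut off and keep the thread of conversation.
    """
    spoken = []
    playback = asyncio.create_task(speak(chunks, spoken))
    interrupt = asyncio.create_task(user_started_talking.wait())
    await asyncio.wait({playback, interrupt}, return_when=asyncio.FIRST_COMPLETED)
    interrupted = not playback.done()
    for task in (playback, interrupt):
        if not task.done():
            task.cancel()
    await asyncio.gather(playback, interrupt, return_exceptions=True)
    return spoken, interrupted


async def demo():
    user_started_talking = asyncio.Event()

    async def simulated_user():
        await asyncio.sleep(0.12)  # the user cuts in partway through the reply
        user_started_talking.set()

    user = asyncio.create_task(simulated_user())
    result = await respond_with_barge_in(REPLY, user_started_talking)
    await user
    return result


spoken, interrupted = asyncio.run(demo())
print(f"interrupted={interrupted}, spoken so far={spoken}")
```

The design point is that playback and listening run concurrently, and the part of the reply that was actually delivered is kept so the next turn can pick up from there.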

2. Enterprise-Grade ASR and Neural Synthesis

Automatic Speech Recognition (ASR) now approaches human-level accuracy in many settings and holds up far better than before in noisy industrial environments. Combined with neural speech synthesis that modulates pitch and pace based on context, AI interactions no longer feel like talking to a “walkie-talkie connected to a magic eight-ball.” They feel more like talking to an empathetic, knowledgeable companion.

3. Real-Time Visual Understanding

The integration of video takes this to another level. AI can now “see” through a digital lens, interpreting facial expressions, gestures, and environmental context. This eliminates the need for users to describe visual inputs with text, creating a seamless loop of natural interaction.

But Here’s the Real Battle: Who Owns Your Voice Data?

Most voice AI tools process conversations through third-party servers, which means your discussions leave your system and you lose control over sensitive information. Your data may be used to train external models, and valuable business intelligence can become exposed without you even realizing it. For enterprises, this creates a serious risk, especially when voice interactions include confidential decisions, customer conversations, or internal strategy.

This is why sovereign voice infrastructure is becoming the new battleground. Companies are now moving away from traditional setups and looking for more control, such as:

- Full ownership of voice and video data

- Private deployment inside their own infrastructure

- Secure processing without third-party exposure

- No data leakage outside the organization

This growing demand for control, privacy and trust is exactly where Altegon enters the picture, offering a sovereign voice AI approach designed for secure, real-time enterprise communication.

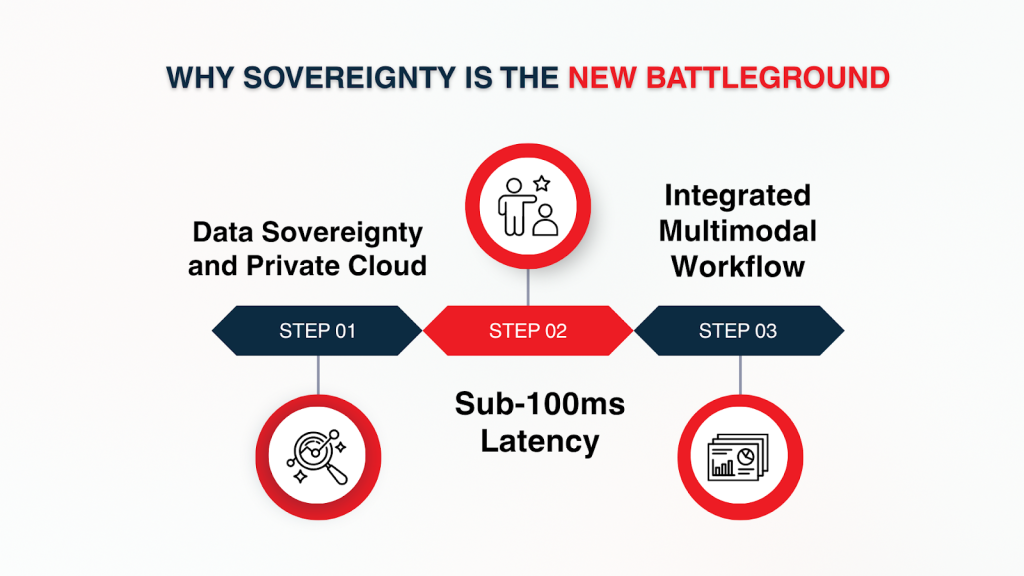

Why Sovereignty is the New Battleground

While many new challengers offer “wrappers” around public APIs, they fail at the most critical enterprise requirement: Sovereignty. If your voice data travels to a third-party server for processing, you have lost control of your most sensitive asset.

This is where Altegon defines the standard.

1. Data Sovereignty and Private Cloud

One of the biggest challenges with traditional voice AI is that your data often leaves your systems, passing through third-party servers. Altegon solves this by allowing enterprises to run voice and video pipelines entirely within their private infrastructure.

This approach ensures that:

- Conversations remain completely private, never leaving your secure environment.

- AI models are isolated, so your data doesn’t feed external systems or competitors.

- Sensitive business intelligence is protected, and your data is never used to train external models.

By keeping AI within your control, Altegon transforms a potential security risk into a strategic advantage, allowing teams to leverage AI confidently for decision-making and collaboration. It’s the same logic driving the broader industry trend in which IT managers are pulling video hosting out of the cloud to regain control over their bandwidth and intellectual property.

2. Sub-100ms Latency

For AI to feel natural, it must respond almost instantly. Even slight delays can interrupt conversation flow, making interactions feel robotic. Altegon is optimized for ultra-low latency, meaning the sequence of Voice → Understanding → Decision → Response happens in under 100 milliseconds.

The result is an interaction that feels fluid, human-like, and immediate. When you speak, the AI listens, interprets and responds without hesitation. It’s less like using software and more like having a live assistant sitting right beside you, ready to act.
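One way to reason about a sub-100 ms target is as a latency budget split across the Voice → Understanding → Decision → Response stages. The sketch below assumes hypothetical per-stage timings; they are illustrative placeholders, not measurements of Altegon or any other system, and `StageTiming` and `check_budget` are names invented for this example.

```python
from dataclasses import dataclass

BUDGET_MS = 100.0  # end-to-end target quoted in the article


@dataclass
class StageTiming:
    name: str
    elapsed_ms: float


def check_budget(stages, budget_ms=BUDGET_MS):
    """Return total latency, whether it fits the budget, and the slowest stage."""
    total = sum(stage.elapsed_ms for stage in stages)
    slowest = max(stages, key=lambda stage: stage.elapsed_ms)
    return total, total <= budget_ms, slowest.name


# Hypothetical per-stage timings, for illustration only.
stages = [
    StageTiming("voice capture + ASR", 35.0),
    StageTiming("understanding", 25.0),
    StageTiming("decision", 15.0),
    StageTiming("response synthesis", 20.0),
]

total, within_budget, slowest = check_budget(stages)
print(f"total={total:.0f} ms, within budget={within_budget}, slowest={slowest}")
```

Tracking the slowest stage matters because any network hop to an external service would have to fit inside the same budget.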

3. Integrated Multimodal Workflow

Altegon goes beyond listening and responding – it actively participates in enterprise workflows. Its AI agents can:

- Join meetings and follow the conversation in real time

- Take notes automatically, capturing key points

- Sync insights to CRM systems for actionable follow-up

- Update ERP platforms with operational data

- Recall previous discussions, even across sessions

- Execute tools and commands as needed

This shifts AI from being prompt-driven to agent-driven. You don’t just ask questions; you can assign tasks, delegate workflows, and let the AI handle execution, all while maintaining control and security. Merging voice with visual context also reflects the fact that live video is the heartbeat of digital communication, providing a high-fidelity signal that text-only AI simply cannot match.
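The agent-driven pattern described above, where a task is delegated and the agent runs tools on the user's behalf, can be sketched as a simple tool registry. Everything here is hypothetical: the tool names (`take_notes`, `sync_crm`) and the `run_task` helper are invented for illustration, and the tool bodies are placeholders rather than real CRM or meeting integrations.

```python
from typing import Callable, Dict, List, Tuple

TOOLS: Dict[str, Callable[[str], str]] = {}


def tool(name: str):
    """Register a function as a tool the agent is allowed to call."""
    def register(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("take_notes")
def take_notes(text: str) -> str:
    return f"noted: {text}"


@tool("sync_crm")
def sync_crm(text: str) -> str:
    # Placeholder: a real integration would call the CRM's API here.
    return f"crm updated: {text}"


def run_task(steps: List[Tuple[str, str]]) -> List[str]:
    """Execute a delegated task as a sequence of (tool name, argument) steps.

    Unregistered tools are refused rather than guessed at, which keeps
    the agent inside the set of actions the organization has approved.
    """
    results = []
    for name, argument in steps:
        if name not in TOOLS:
            results.append(f"unknown tool: {name}")
            continue
        results.append(TOOLS[name](argument))
    return results


results = run_task([
    ("take_notes", "Q3 pricing approved"),
    ("sync_crm", "Q3 pricing approved"),
    ("book_flight", "Lisbon"),  # not registered, so it is refused
])
for line in results:
    print(line)
```

Restricting execution to an explicit registry is one simple way to let an agent act while keeping control over what it can touch.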

Quick Insight: According to Gartner’s 2026 AI Infrastructure Report, 75% of enterprises will move to Sovereign AI by 2027 to eliminate the 500ms “latency tax” and data privacy risks inherent in consumer-grade assistants like Siri and Alexa.

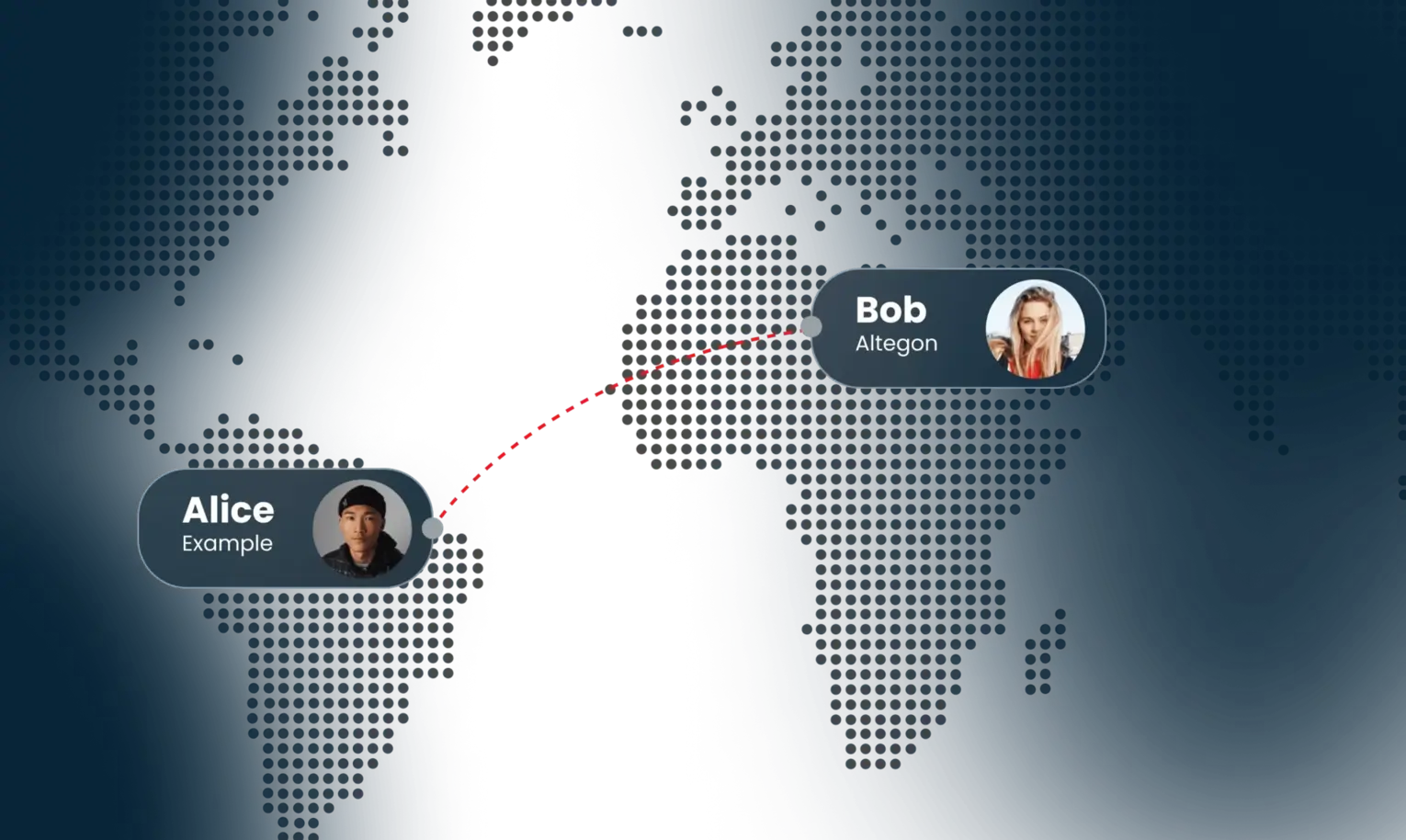

Real-World Example: Hosting Borderless Global Events

One global enterprise faced a huge challenge: it needed to host large-scale international events with thousands of participants across multiple countries. Participants spoke different languages, had different accents, and joined from regions with varying internet quality. High traffic spikes and the need for real-time collaboration made traditional communication tools almost impossible to scale.

Using Altegon’s sovereign voice AI pipeline, these challenges were addressed seamlessly. The AI provided real-time language understanding, allowing participants to speak naturally without worrying about translation delays. Multi-party conversations were managed automatically, ensuring that discussions flowed smoothly even with hundreds of simultaneous speakers. Meanwhile, the AI note-taker captured all key points and synced summaries directly to enterprise tools, eliminating manual note-taking and reducing errors.

The result? Meetings became truly borderless and effortless. Teams collaborated across continents as if they were in the same room, without missing a beat. Translation delays, manual notes, and communication gaps were eliminated – making complex global events simple and efficient.

The Future of AI Is No Longer Text

The way we interact with AI is undergoing a fundamental shift:

- Typing → Talking: From slow text input to natural speech.

- Commands → Conversations: From rigid instructions to dynamic dialogue.

- Assistants → Agents: From reactive tools to proactive collaborators.

- Cloud AI → Sovereign AI: From public systems to fully controlled, secure infrastructure.

Companies that adopt voice-first intelligence will move faster, collaborate better, and automate more. Those still relying on legacy assistants or text-based interfaces will gradually fall behind.

Voice is no longer just a convenience. It is becoming the primary interface for intelligent systems, and the race for enterprise-ready, sovereign AI has only just begun.

Altegon is built for that future, enabling organizations to harness real-time voice and video intelligence while keeping control, security, and collaboration at the center.

Shaping Tomorrow’s Digital Interactions

The evolution from text to voice and video marks a fundamental shift in human-computer interaction. For developers and businesses, the learning curve is steep, but the rewards are transformative.

Those who rely on outdated, non-sovereign interfaces will be left behind. The future belongs to those who embrace Sovereign Voice AI: technology built for the speed and security of business, not the limitations of the kitchen counter.

Altegon is ready to power that shift.